Data-efficient Neural Text Compression with Interactive Learning

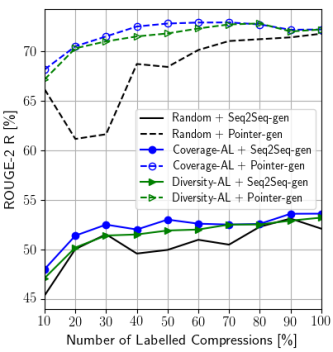

Abstract. Neural sequence-to-sequence models have been successfully applied to text compression. However, these models were trained on huge, automatically induced parallel corpora, which are available only for a few domains and tasks. In this paper, we propose a novel interactive setup for neural text compression that enables transferring a model to new domains and compression tasks with minimal human supervision. This is achieved through active learning, which selectively samples the instances to annotate from a large pool of unlabeled data. Using this setup, we can successfully adapt a model trained on a small dataset of 40k samples for a headline generation task to a general text compression dataset at an acceptable compression quality with just 500 sampled instances annotated by a human.
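As a rough illustration of the pool-based sampling step, the sketch below ranks unlabeled sentences by an uncertainty score and hands the top ones to an annotator. The abstract does not specify the sampling criterion, so the function `select_for_annotation`, the `uncertainty` callback, and the toy length-based score are all hypothetical stand-ins, not the method evaluated in the paper.

```python
from typing import Callable, List, Sequence, Tuple


def select_for_annotation(
    pool: Sequence[str],
    uncertainty: Callable[[str], float],
    budget: int,
) -> Tuple[List[str], List[str]]:
    """Return (selected, remaining): the `budget` highest-scoring sentences and the rest."""
    # Rank pool indices from most to least uncertain (hypothetical scoring function).
    ranked = sorted(range(len(pool)), key=lambda i: -uncertainty(pool[i]))
    chosen = set(ranked[:budget])
    selected = [pool[i] for i in ranked[:budget]]
    # Keep unselected sentences in their original pool order for the next round.
    remaining = [s for i, s in enumerate(pool) if i not in chosen]
    return selected, remaining


# Toy stand-in for model uncertainty: longer sentences count as "more uncertain".
def toy_uncertainty(sentence: str) -> float:
    return float(len(sentence.split()))


pool = ["a b c", "a", "a b c d e", "a b"]
selected, remaining = select_for_annotation(pool, toy_uncertainty, budget=2)
```

In an interactive loop, the selected sentences would be compressed by a human, added to the training set, and the model retrained before the next selection round; in practice the score would come from the model itself rather than from sentence length.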